Mini Deployment of a Machine Learning Model for URL Anomaly Detection

0x01 Model Training

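Training set: a set of normal URLs and a set of abnormal (malicious) URLs

Training approach: vectorize the URL text with TF-IDF, then train a logistic regression classifier on the vectors

Training result: binary classification, where 1 means abnormal (malicious) and 0 means normal, matching the labels used in the code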
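To make the vectorization step concrete, the sketch below lists the character n-grams a URL query breaks into when n ranges from 1 to 3. It is a pure-Python approximation for illustration only: `char_ngrams` is a hypothetical helper, not scikit-learn's implementation, and it skips the lowercasing and whitespace normalization a real vectorizer may apply. The two query strings are made up.

```python
from collections import Counter


def char_ngrams(text, min_n=1, max_n=3):
    """Return every character n-gram of text for min_n <= n <= max_n (hypothetical helper)."""
    grams = []
    for n in range(min_n, max_n + 1):
        for start in range(len(text) - n + 1):
            grams.append(text[start:start + n])
    return grams


# Illustrative query strings, not taken from the training files.
normal = "/item?id=7"
suspicious = "/item?id=7' or '1'='1"

normal_counts = Counter(char_ngrams(normal))
suspicious_counts = Counter(char_ngrams(suspicious))

# n-grams that occur only in the suspicious query
only_suspicious = sorted(set(suspicious_counts) - set(normal_counts))
print(len(char_ngrams(normal)), "n-grams in the normal query")
print(only_suspicious[:10])
```

TF-IDF then assigns each of these n-grams a weight, and n-grams that show up mostly in malicious samples are the kind of feature a linear classifier can pick up on.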

代码:

from sklearn.feature_extraction.text import TfidfVectorizer

import os

from sklearn.cross_validation import train_test_split

from sklearn.linear_model import LogisticRegression

from sklearn import metrics

from sklearn import svm

import urlparse

def loadFile(name):

directory = str(os.getcwd())

filepath = os.path.join(directory, name)

with open(filepath,'r') as f:

data = f.readlines()

data = list(set(data))

result = []

for d in data:

d = str(urlparse.unquote(d)) #converting url encoded data to simple string

result.append(d)

return result

badQueries = loadFile('badqueries_1.txt')

validQueries = loadFile('goodqueries_1.txt')

badQueries = list(set(badQueries))

validQueries = list(set(validQueries))

allQueries = badQueries + validQueries

yBad = [1 for i in range(0, len(badQueries))] #labels, 1 for malicious and 0 for clean

yGood = [0 for i in range(0, len(validQueries))]

y = yBad + yGood

queries = allQueries

vectorizer = TfidfVectorizer(min_df = 0.0, analyzer="char", sublinear_tf=True, decode_error='ignore',ngram_range=(1,3)) #converting data to vectors

X = vectorizer.fit_transform(queries)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42) #splitting data

badCount = len(badQueries)

validCount = len(validQueries)

lgs = LogisticRegression(class_weight={1: 2 * validCount / badCount, 0: 1.0}) # class_weight='balanced')

lgs.fit(X_train, y_train) #training our model

##############

# Evaluation #

##############

predicted = lgs.predict(X_test)

fpr, tpr, _ = metrics.roc_curve(y_test, (lgs.predict_proba(X_test)[:, 1]))

auc = metrics.auc(fpr, tpr)

print("Bad samples: %d" % badCount)

print("Good samples: %d" % validCount)

print("Baseline Constant negative: %.6f" % (validCount / (validCount + badCount)))

print("------------")

print("Accuracy: %f" % lgs.score(X_test, y_test)) #checking the accuracy

print("Precision: %f" % metrics.precision_score(y_test, predicted))

print("Recall: %f" % metrics.recall_score(y_test, predicted))

print("F1-Score: %f" % metrics.f1_score(y_test, predicted))

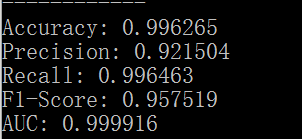

print("AUC: %f" % auc)本地加载了部分训练集数据试着跑了一下代码,得到模型的精确率、准确率、召回率、F1-score
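For reference, the accuracy, precision, recall, and F1-score reported above can be derived by hand from the confusion counts. The sketch below does this for made-up labels (not the article's data), treating 1 (malicious) as the positive class; `binary_metrics` is a hypothetical helper written for this illustration.

```python
def binary_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall and F1 with 1 as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # malicious, flagged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # normal, flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # malicious, missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # normal, passed

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Made-up labels: 4 malicious and 6 normal samples.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]

scores = binary_metrics(y_true, y_pred)
print(scores)
```

For a detector like this one, recall says how many malicious URLs are caught, while precision says how many of the flagged URLs were actually malicious.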

0x02 Lightweight Deployment

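How to take a model trained offline and put it into production is a real question. After consulting material on deploying machine learning models, I chose a minimal approach: serve it directly from a Python HTTP server, i.e. django + gunicorn + nginx + the machine learning model.

Django part: first export the model trained in 0x01, which joblib.dump handles directly (the training script needs import joblib for this):

    joblib.dump(lgs, 'lr.sav')
    joblib.dump(vectorizer, 'l.sav')

The Django code then loads the exported model and uses it to check the URL submitted by the user.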
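A minimal round-trip sketch of the export step, assuming scikit-learn and joblib are installed. The toy URLs, labels, and temporary file locations are illustrative stand-ins, not the article's data or paths; the point is only that both the fitted vectorizer and the classifier are dumped, and that the reloaded pair reproduces the original predictions.

```python
import os
import tempfile

import joblib
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression

# Toy data for illustration only: 1 = malicious, 0 = normal.
queries = [
    "/index.html",
    "/item?id=7",
    "/login?user=alice",
    "/item?id=7' or '1'='1",
    "/search?q=<script>alert(1)</script>",
    "/view?file=../../etc/passwd",
]
labels = [0, 0, 0, 1, 1, 1]

vectorizer = TfidfVectorizer(analyzer="char", sublinear_tf=True, ngram_range=(1, 3))
X = vectorizer.fit_transform(queries)
model = LogisticRegression().fit(X, labels)

with tempfile.TemporaryDirectory() as tmp:
    model_path = os.path.join(tmp, "lr.sav")
    vectorizer_path = os.path.join(tmp, "l.sav")

    # Export both artifacts, as in the training script.
    joblib.dump(model, model_path)
    joblib.dump(vectorizer, vectorizer_path)

    # Load them back, as the web application would.
    loaded_model = joblib.load(model_path)
    loaded_vectorizer = joblib.load(vectorizer_path)

original = model.predict(X).tolist()
reloaded = loaded_model.predict(loaded_vectorizer.transform(queries)).tolist()
print(original == reloaded)
```

joblib files are pickle-based, so they should only be loaded from a trusted source and ideally with the same scikit-learn version that wrote them.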

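The Django view.py code is:

    import joblib  # sklearn.externals.joblib was removed from newer scikit-learn releases
    from django.shortcuts import render
    from sklearn.feature_extraction.text import TfidfVectorizer


    def detect(request):
        url_input = request.POST['url_input']
        url_queries = [url_input]
        # reuse the vocabulary of the vectorizer exported during training
        voca = joblib.load('/root/ml/ml/l.sav').vocabulary_
        vectorizer = TfidfVectorizer(min_df=0.0, analyzer="char", sublinear_tf=True,
                                     decode_error='ignore', ngram_range=(1, 3),
                                     vocabulary=voca)  # converting data to vectors
        # note: fit_transform recomputes the IDF weights from this single input
        Y = vectorizer.fit_transform(url_queries)
        load_model = joblib.load('/root/ml/ml/lr.sav')
        predicted = load_model.predict(Y)
        for i in predicted:
            if i == 0:
                message = url_input + ' is ' + 'Normal'
            else:
                message = url_input + ' is ' + 'Malicious'
        context = {'key': message}
        return render(request, 'display.html', context)


    def searchform(request):
        return render(request, 'search_form.html')


    def index(request):
        return render(request, 'index.html')

Next comes the actual deployment of Django with gunicorn + nginx. nginx serves Django's static resources and proxies HTTP requests. Note that in a development environment with DEBUG enabled, Django's built-in server handles static resources automatically, which is convenient: python manage.py runserver is all it takes. In production, nginx has to serve the static resources instead. For example, set STATIC_ROOT='/var/www/static' in settings.py and run python manage.py collectstatic to gather every static resource the project uses into the STATIC_ROOT directory, then configure nginx. (nginx's root directive appends the full request URI to the configured path, so with the configuration below a request for /static/... is read from /var/www/static/...; a STATIC_ROOT of plain /var/www would not be found.) The relevant part of nginx.conf is: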

    location /static/ {
        root /var/www/;
    }
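How nginx maps a request under /static/ to a file depends on whether the location uses root or alias, and that in turn determines which directory collectstatic has to fill. The sketch below models the two mappings in plain Python; `resolve_with_root` and `resolve_with_alias` are hypothetical helpers that mirror the documented behaviour of the two directives, not nginx code, and the CSS path is made up.

```python
import posixpath


def resolve_with_root(root, uri):
    """root: the full request URI is appended to the root path."""
    return posixpath.join(root, uri.lstrip("/"))


def resolve_with_alias(location, alias, uri):
    """alias: the matched location prefix is replaced by the alias path."""
    return posixpath.join(alias, uri[len(location):])


uri = "/static/css/site.css"  # illustrative request path

with_root = resolve_with_root("/var/www/", uri)
with_alias = resolve_with_alias("/static/", "/var/www/", uri)

print("root  ->", with_root)
print("alias ->", with_alias)
```

With the `root /var/www/;` directive shown above, the collected files therefore need to live in /var/www/static; with `alias /var/www/;` they would live directly in /var/www.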

nginx is the entry point for user traffic and only serves static resources itself; dynamic requests are forwarded to gunicorn. The relevant part of nginx.conf is:

    location / {
        proxy_pass http://127.0.0.1:8080;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header Host $host;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    }

Here http://127.0.0.1:8080 is the address the gunicorn service listens on. gunicorn starts the Django service with a command such as sudo gunicorn -w 3 -b 0.0.0.0:8080 ml.wsgi:application; the parameters can be tuned as needed, and supervisor can be added to manage the gunicorn process.
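The command-line flags can also live in a gunicorn configuration file, which is itself plain Python. A sketch, assuming the file is saved as gunicorn.conf.py next to manage.py (a hypothetical location). Binding to 127.0.0.1 instead of 0.0.0.0 is a suggestion rather than the article's setting: nginx proxies to the loopback address anyway, so gunicorn does not need to be reachable from outside. The timeout value is illustrative.

```python
# gunicorn.conf.py (hypothetical file name and location)

# Address gunicorn listens on; it must match proxy_pass in nginx.conf.
bind = "127.0.0.1:8080"

# Number of worker processes, equivalent to "-w 3" on the command line.
workers = 3

# Seconds a worker may stay silent before it is killed and restarted
# (illustrative value, tune to how long a prediction takes).
timeout = 30
```

It would then be started with gunicorn -c gunicorn.conf.py ml.wsgi:application.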

0x03 Pitfalls

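Deploying a Python web app is fairly simple, and there is plenty of material online; it comes down to configuring nginx to serve static resources and forward requests. The real pitfall is TF-IDF. It is very convenient during training; the key code is:

    vectorizer = TfidfVectorizer(min_df=0.0, analyzer="char", sublinear_tf=True,
                                 decode_error='ignore', ngram_range=(1, 3))  # converting data to vectors
    X = vectorizer.fit_transform(queries)
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)  # splitting data
    badCount = len(badQueries)
    validCount = len(validQueries)
    lgs = LogisticRegression(class_weight={1: 2 * validCount / badCount, 0: 1.0})  # class_weight='balanced')
    lgs.fit(X_train, y_train)  # training our model
    predicted = lgs.predict(X_test)

Porting it is another matter, because the code above trains and predicts in the same file. Training and prediction must use the same TF-IDF vocabulary; otherwise the feature columns no longer line up with the model's coefficients and the program does not run correctly. Building the TF-IDF vocabulary takes time, so it cannot be rebuilt from the training data on every request when the deployment has to respond in real time. The trained model therefore has to be exported together with the vocabulary TF-IDF used, so that the deployment can load both directly and run normally. The key code is: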

    voca = joblib.load('/root/ml/ml/l.sav').vocabulary_
    vectorizer = TfidfVectorizer(min_df=0.0, analyzer="char", sublinear_tf=True,
                                 decode_error='ignore', ngram_range=(1, 3),
                                 vocabulary=voca)  # converting data to vectors

Machine learning security detection demo: http://4o4notfound.org:88/
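One caveat with rebuilding the vectorizer from the vocabulary alone: calling fit_transform on a single URL recomputes the IDF weights from that one document, so the feature values can differ from what the model saw during training even though the columns line up. Since the whole fitted vectorizer is already saved in l.sav, an alternative is to load it and call transform. The sketch below compares the two on toy data (not the article's) and assumes scikit-learn and NumPy are installed.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer

# Toy "training" queries for illustration only.
train_queries = [
    "/index.html",
    "/login?user=admin",
    "/search?q=<script>alert(1)</script>",
    "/item?id=1 or 1=1",
]
params = dict(analyzer="char", sublinear_tf=True, ngram_range=(1, 3))
fitted = TfidfVectorizer(**params).fit(train_queries)

new_url = ["/login?user=admin' or 1=1"]

# Option 1: reuse the fitted vectorizer, keeping the IDF weights learned in training.
reused = fitted.transform(new_url)

# Option 2: rebuild from the vocabulary only and refit on the single input,
# which recomputes the IDF weights from that one document.
rebuilt = TfidfVectorizer(vocabulary=fitted.vocabulary_, **params).fit_transform(new_url)

same_shape = reused.shape == rebuilt.shape
same_values = np.allclose(reused.toarray(), rebuilt.toarray())
print("same shape:", same_shape)
print("same values:", same_values)
```

In a Django view, option 1 amounts to loading the vectorizer with joblib.load and calling transform on the submitted URL. Loading both files once at module import rather than inside the view would also avoid re-reading them on every request.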

0x04 References

http://ytluck.github.io/program/my-program-post-22.html

http://www.jianshu.com/p/1200b78b9ab3

https://www.r-bloggers.com/lang/uncategorized/1579

http://python.jobbole.com/84355/

http://knightyang.com/2017/10/18/python%E7%AE%97%E6%B3%95%E6%A8%A1%E5%9E%8B%E5%B8%B8%E7%94%A8%E9%83%A8%E7%BD%B2%E6%96%B9%E5%BC%8F%E6%80%BB%E7%BB%93/